Kafka Connector

Before we explore the Kafka connector, one of the many application connectors provided by Boomi, we should know what Kafka is.

What is Kafka?

Kafka is an open-source distributed event store and stream-processing platform that helps build real-time streaming data pipelines and applications that adapt to the data streams.

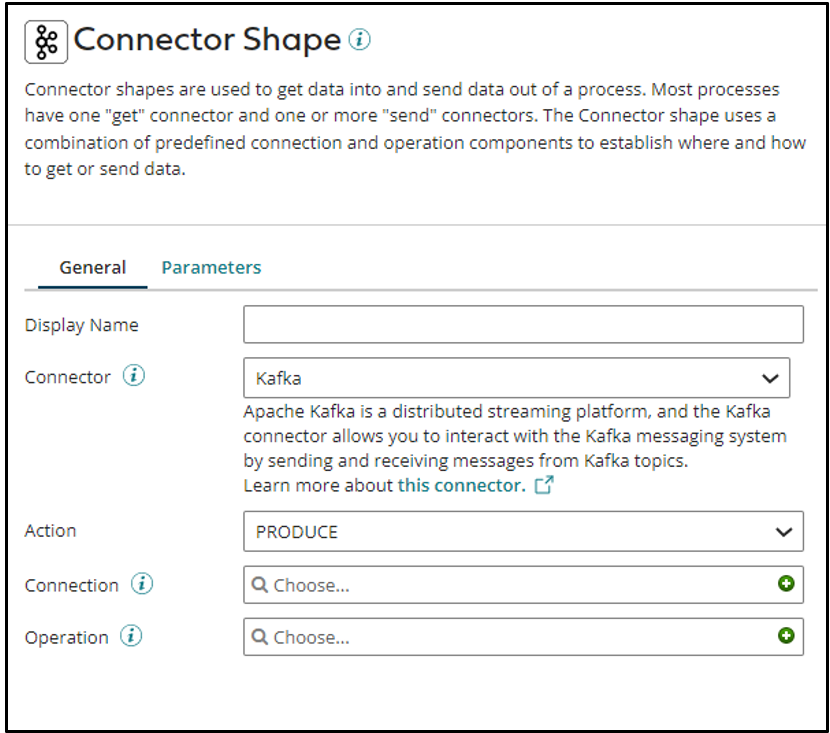

Coming back to the Kafka connector: as already mentioned, it’s an application connector provided by Boomi to connect to and exchange data with the Kafka platform. Using this connector, you can connect directly to the Kafka platform, browse the interfaces in real time, and exchange Kafka data with any cloud or on-premises platform.

Like every other connector in Boomi, the Kafka connector requires two components, a Kafka Connection and a Kafka Operation, to connect to the Kafka platform.

Kafka Connection:

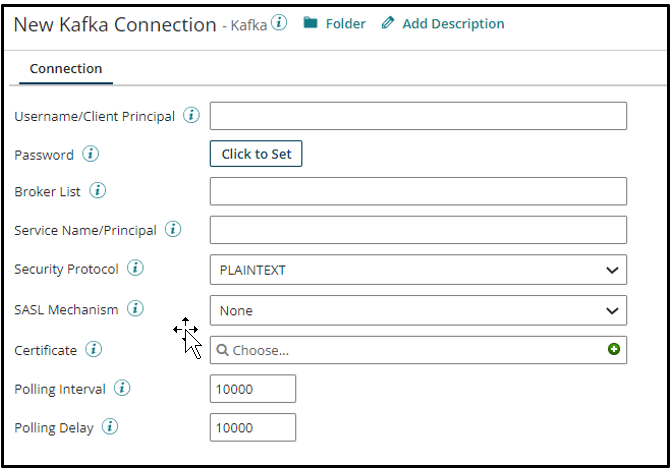

Stores the essential details required for establishing the Boomi-Kafka connectivity.

- Name: One can set the desired name for the connection in this field.

- Username/Client Principal: Stores the username to log into the Kafka account.

- Password: Stores the password of the Kafka account used to log onto the Kafka platform.

- Broker List: Stores a comma-separated list of host:port pairs for the Kafka brokers, for example host1:9092,host2:9092.

- Service Name/Principal: Stores the Kerberos service principal name that the Kafka brokers run as, which applies when Kerberos (GSSAPI) authentication is used.

- Security Protocol: Selects one of the four security protocols Kafka supports: PLAINTEXT, SSL, SASL_PLAINTEXT, and SASL_SSL.

- SASL Mechanism: Lets you select one of the available SASL mechanisms.

- Certificate: Stores the private certificate used for SSL authentication.

- Polling Interval: Specify the time interval at which the connector’s Listen operation polls the data from the server.

- Polling Delay: Specify the time interval the connector waits before starting to poll the data.
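To make the connection fields more concrete, here is a minimal sketch of how a broker list and security protocol might translate into standard Apache Kafka client properties (bootstrap.servers, security.protocol, sasl.mechanism). The function names are hypothetical and this is not how Boomi implements the connection internally; it only illustrates the expected format of the values.

```python
# Illustrative only: parse_broker_list and build_client_config are
# hypothetical helpers, not part of Boomi or any Kafka library.
# The dictionary keys are standard Apache Kafka client property names.

SECURITY_PROTOCOLS = {"PLAINTEXT", "SSL", "SASL_PLAINTEXT", "SASL_SSL"}


def parse_broker_list(broker_list):
    """Split 'host1:9092,host2:9092' into [(host, port), ...]."""
    brokers = []
    for entry in broker_list.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate trailing commas
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        brokers.append((host, int(port)))
    if not brokers:
        raise ValueError("broker list is empty")
    return brokers


def build_client_config(broker_list, security_protocol="PLAINTEXT",
                        sasl_mechanism=None):
    """Validate the connection fields and return Kafka-style properties."""
    if security_protocol not in SECURITY_PROTOCOLS:
        raise ValueError(f"unknown security protocol: {security_protocol}")
    brokers = parse_broker_list(broker_list)
    config = {
        "bootstrap.servers": ",".join(f"{h}:{p}" for h, p in brokers),
        "security.protocol": security_protocol,
    }
    # A SASL mechanism only makes sense with the two SASL protocols.
    if security_protocol.startswith("SASL"):
        if not sasl_mechanism:
            raise ValueError("a SASL mechanism is required for SASL protocols")
        config["sasl.mechanism"] = sasl_mechanism
    return config


if __name__ == "__main__":
    print(build_client_config("broker1:9092, broker2:9093", "SASL_SSL", "PLAIN"))
```

Validating the values up front like this catches a malformed broker entry or a missing SASL mechanism before any connection attempt is made.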

Kafka Operation:

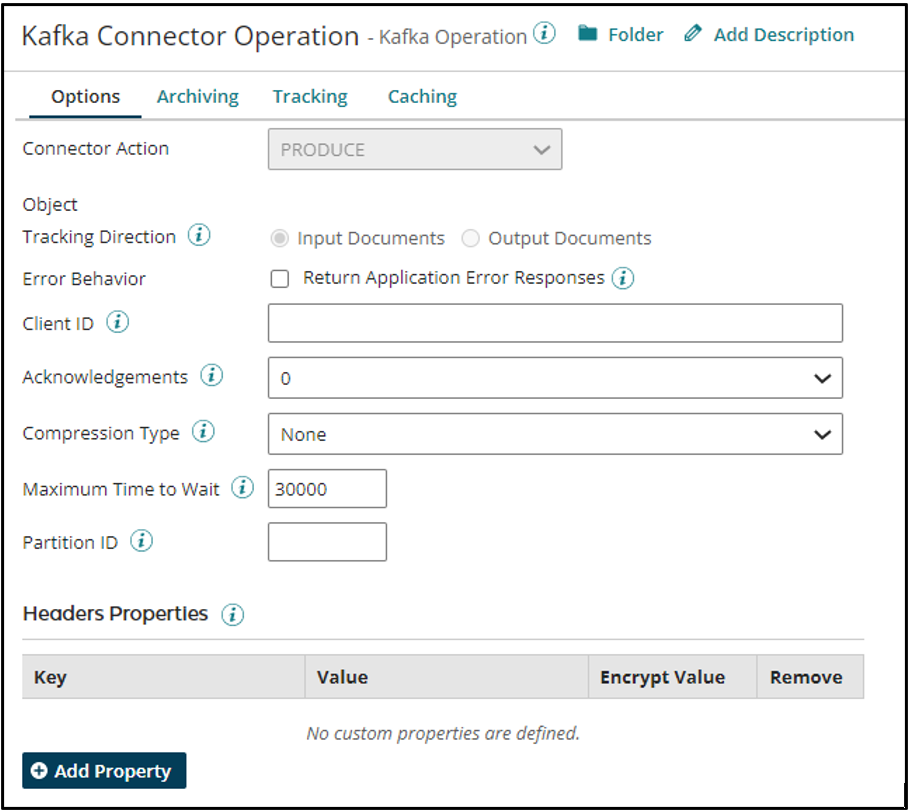

Defines the action that needs to be performed on a specific Kafka object using the Kafka Connection.

- Name: One can set the desired operation name in this field.

- Object: The Kafka Object against which you want to perform the said action.

- Request Profile: Defines the schema/structure of the request sent to Kafka.

- Response Profile: Defines the schema/structure of the response received from Kafka.

- Tracking Direction: This enables you to choose whether to track the input or output document and display the same in process reporting.

- Client ID: Enter the unique ID used to identify the client application in Kafka.

- Acknowledgements: Select the number of acknowledgments the Kafka server must receive before considering the request as complete.

- Compression Type: Select the format used to compress and send messages to the Kafka server.

- Maximum Time to Wait: The time to wait for the messages to publish before failing with a timeout error.

- Partition ID: Enter the partition ID where the message is to be stored.
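The operation fields above resemble the settings a Kafka producer takes. The sketch below validates them against the values Apache Kafka itself accepts for acks ("0", "1", "all") and compression.type (none, gzip, snappy, lz4, zstd). Whether the Boomi operation offers exactly these options is an assumption, and build_producer_settings, max_wait_ms, and partition are hypothetical names used only for illustration.

```python
# Illustrative only: build_producer_settings is a hypothetical helper.
# "client.id", "acks" and "compression.type" are standard Apache Kafka
# producer properties; "max_wait_ms" and "partition" are made-up keys
# standing in for the Maximum Time to Wait and Partition ID fields.

VALID_ACKS = {"0", "1", "all"}
COMPRESSION_TYPES = {"none", "gzip", "snappy", "lz4", "zstd"}


def build_producer_settings(client_id, acks="1", compression_type="none",
                            max_wait_ms=30000, partition_id=None):
    """Validate operation fields and return them as a settings dict."""
    if not client_id:
        raise ValueError("client ID must not be empty")
    if acks not in VALID_ACKS:
        raise ValueError(f"acks must be one of {sorted(VALID_ACKS)}")
    if compression_type not in COMPRESSION_TYPES:
        raise ValueError(f"unsupported compression type: {compression_type}")
    if max_wait_ms <= 0:
        raise ValueError("maximum time to wait must be positive")
    if partition_id is not None and partition_id < 0:
        raise ValueError("partition ID must be zero or greater")
    return {
        "config": {
            "client.id": client_id,
            "acks": acks,
            "compression.type": compression_type,
        },
        "max_wait_ms": max_wait_ms,
        # None means no explicit partition was requested.
        "partition": partition_id,
    }


if __name__ == "__main__":
    print(build_producer_settings("orders-process", acks="all",
                                  compression_type="gzip", partition_id=0))
```

In Kafka, acks set to "all" waits for every in-sync replica to confirm the write, which trades some latency for durability, while "0" does not wait for any acknowledgment.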

Cover Photo by Claudio Schwarz on Unsplash